Autonomous Driving Tutorial

This post builds a self-driving car simulation. You will see how Genetic Algorithms and Neural Networks work in practice. The environment is a 3D terrain with a track on which the cars learn to drive.

INTRODUCTION

In this tutorial you will learn how to create a self-driving simulator in which the cars learn to drive through a genetic algorithm inspired by Darwin’s theory of natural selection.

The project is built in Unity 3D with C# as the programming language. You will learn how to design the map in Unity, how the algorithms work, and how to program them in C#.

The project can be divided into the following parts:

- Neural Network: we will be creating a multilayer neural network

- Genetic Algorithm with its respective crossover and mutation functions

- Visual simulator in 3D with Unity: create your track

- Lasers: input data of the neural network

- Car movement: output actions of the network

- Camera: viewing the simulation in an intelligent way

- Controller: handles all the executions and the algorithms

UNDERSTANDING THE PROJECT

This video explains how all the components work: the Neural Network, the Genetic Algorithm, the Camera and the Cars.

I explain the necessary maths and algorithms for the simulator.

NEURAL NETWORK

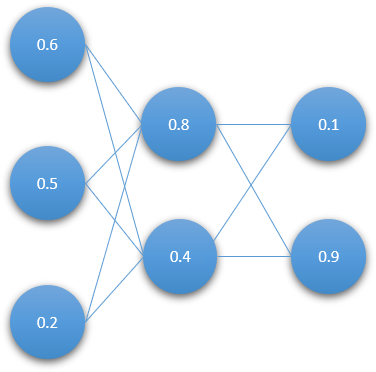

Neural Networks are computing systems that map stimuli (inputs) to actions or candidate solutions (outputs). A neural network is organized in layers, and each layer has a certain number of neurons. The neurons of one layer are connected to the neurons of the next through weights. Every neuron and every weight stores a value. In our project the weight values stay fixed while the network is being evaluated. The neuron values, however, change throughout the simulation because they depend on the input layer and on the weights of the network.

In our project each car has its own neural network, which is re-evaluated on every update. The algorithm that performs this evaluation is called feed-forward. The input layer of each car holds the distances to the walls that surround it. From these inputs and the weights we obtain the outputs: the rotation and the acceleration.

To put this into practice we will create a C# class called Neural Network whose variables are the List of float[][] holding the weights and the List of neurons organized in layers. It will have two constructors: one for the first generation of cars, which assigns random weights, and one that assigns a given DNA to the weights. It will also implement the feed-forward algorithm. You can learn how to program the Neural Network in the next tutorial:

For a deeper explanation of neural networks, see Neural Networks and Feed-Forward Neural Networks.
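As a rough illustration of the class described above, here is a minimal sketch in plain C#. The name `NeuralNetworkSketch`, the `tanh` activation and the absence of bias terms are assumptions made for this example; the tutorial's own class may differ. It stores only the weights and computes the neuron values on the fly.

```csharp
using System;
using System.Collections.Generic;

// Illustrative sketch only; names and activation function are assumptions.
public class NeuralNetworkSketch
{
    // weights[l][j][i] connects neuron i of layer l to neuron j of layer l + 1.
    private readonly List<float[][]> weights = new List<float[][]>();

    // First generation: every weight is random, roughly uniform in [-1, 1].
    public NeuralNetworkSketch(int[] layerSizes, Random rng)
    {
        Build(layerSizes, () => (float)(rng.NextDouble() * 2.0 - 1.0));
    }

    // Later generations: weights are read in order from a flat DNA array,
    // which must contain exactly one gene per weight.
    public NeuralNetworkSketch(int[] layerSizes, float[] dna)
    {
        int gene = 0;
        Build(layerSizes, () => dna[gene++]);
    }

    private void Build(int[] layerSizes, Func<float> nextWeight)
    {
        for (int l = 0; l < layerSizes.Length - 1; l++)
        {
            var layer = new float[layerSizes[l + 1]][];
            for (int j = 0; j < layer.Length; j++)
            {
                layer[j] = new float[layerSizes[l]];
                for (int i = 0; i < layer[j].Length; i++)
                    layer[j][i] = nextWeight();
            }
            weights.Add(layer);
        }
    }

    // Feed-forward: each neuron is tanh of the weighted sum of the previous layer.
    public float[] FeedForward(float[] inputs)
    {
        float[] current = inputs;
        foreach (float[][] layer in weights)
        {
            var next = new float[layer.Length];
            for (int j = 0; j < layer.Length; j++)
            {
                float sum = 0f;
                for (int i = 0; i < current.Length; i++)
                    sum += layer[j][i] * current[i];
                next[j] = (float)Math.Tanh(sum);
            }
            current = next;
        }
        return current;
    }
}
```

With `tanh`, every output lies between -1 and 1, which is convenient when the two outputs are later read as rotation and acceleration.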

GENETIC ALGORITHM

Instead of training the Neural Network with backpropagation, we will let the cars learn from how well they drive, in the spirit of reinforcement learning, by applying a Genetic Algorithm to all of them. Following Darwin’s idea of natural selection, the entity (car) with the best genes survives and passes its genes on to the next generation. Each generation should therefore drive better and survive longer.

To express this mathematically for our cars, we start by giving each car its own list of genes. There are as many genes as there are weights in the car’s neural network. In the first generation this array is filled with random values.

We can divide the Genetic Algorithm into the following steps:

- Random Initialization of DNAs

- Selection of best cars depending on the fitness function

- Crossover of the genes of the parents

- Mutation of the new DNA

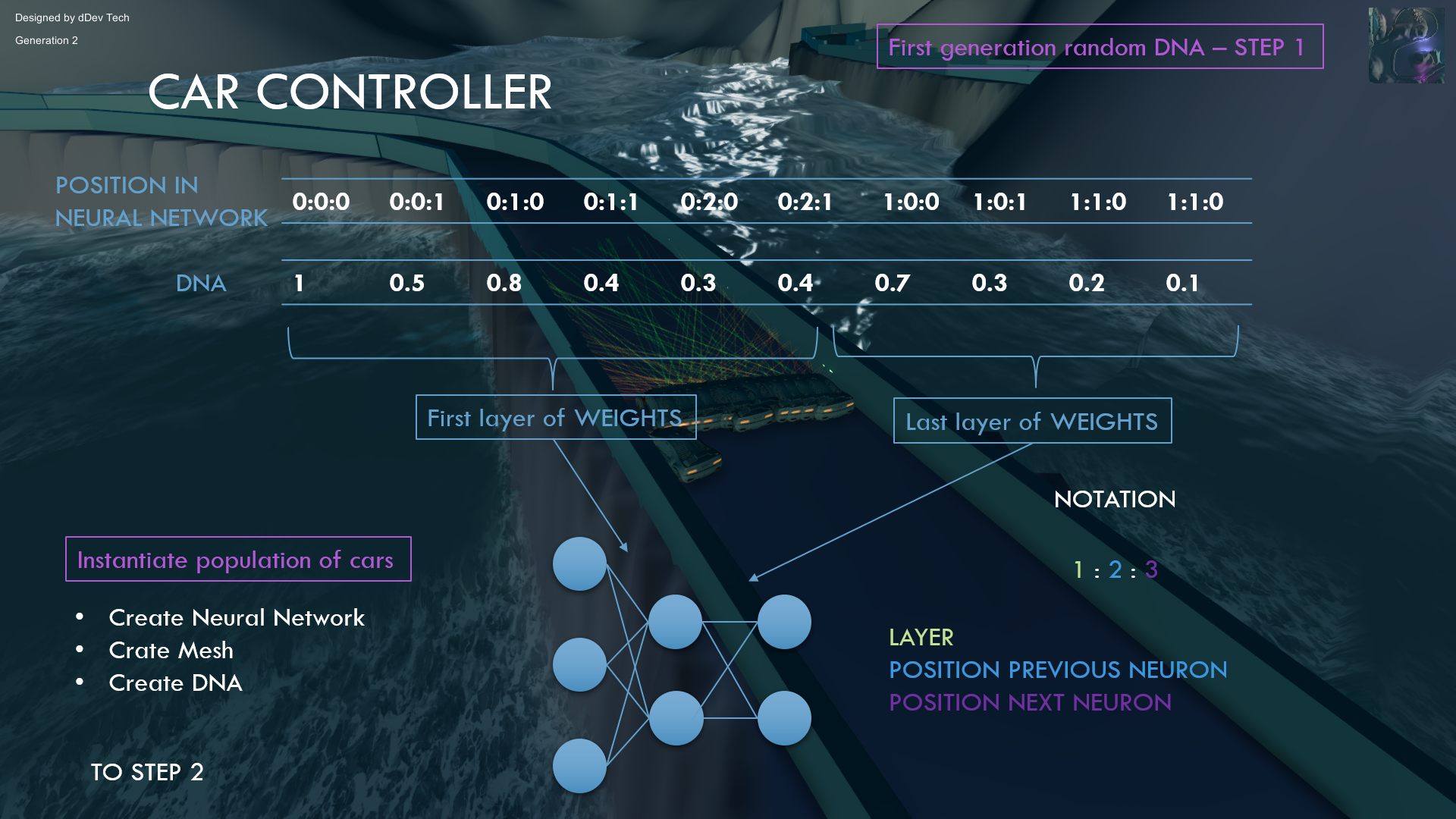

- INITIALIZATION

The random initialization of the DNAs is executed only in the first generation, when the Neural Networks of the cars are created. We create each DNA as a List whose length is the number of weights in the Neural Network, with genes in the interval [-1, 1].

We create a different random DNA for each car and then assign it to the weights of that car’s Neural Network.
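The initialization step can be sketched as follows. This is a minimal example in plain C#, assuming a fully connected network without bias terms; `DnaInitSketch` and its method names are hypothetical, and arrays are used instead of a List for brevity.

```csharp
using System;

// Illustrative sketch only; names are assumptions, not the tutorial's code.
public static class DnaInitSketch
{
    // Number of weights in a fully connected network (no bias terms assumed).
    public static int CountWeights(int[] layerSizes)
    {
        int count = 0;
        for (int l = 0; l < layerSizes.Length - 1; l++)
            count += layerSizes[l] * layerSizes[l + 1];
        return count;
    }

    // One random DNA: each gene is drawn roughly uniformly from [-1, 1].
    public static float[] RandomDna(int length, Random rng)
    {
        var dna = new float[length];
        for (int i = 0; i < length; i++)
            dna[i] = (float)(rng.NextDouble() * 2.0 - 1.0);
        return dna;
    }

    // One independent random DNA per car for the first generation.
    public static float[][] FirstGeneration(int carCount, int[] layerSizes, Random rng)
    {
        int geneCount = CountWeights(layerSizes);
        var population = new float[carCount][];
        for (int c = 0; c < carCount; c++)
            population[c] = RandomDna(geneCount, rng);
        return population;
    }
}
```

For example, a network with layers of 5, 4 and 2 neurons has 5·4 + 4·2 = 28 weights, so each car would get a DNA of 28 genes.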

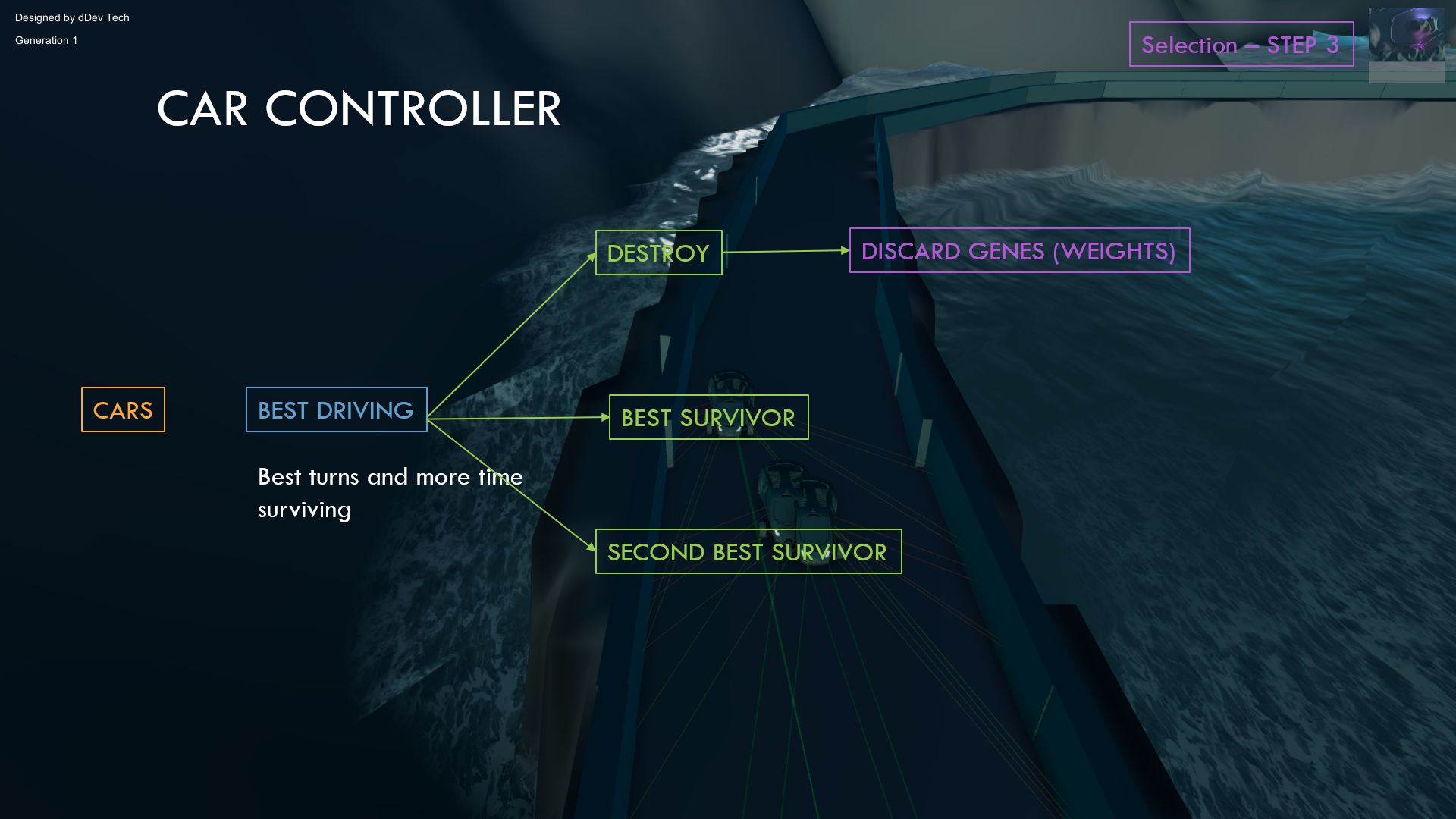

- SELECTION

When the simulation finishes because all the cars have collided with a wall, we must choose which cars have the best genes. This can be done in many ways, for example by distance traveled, average speed or time spent driving. For this project I chose the time spent driving, so we take the genes of the two cars that survived longest on the track.

All these factors could be combined to obtain a better selection of parents. The choice can also depend on the diversity we want to achieve.

We could create a function of the factors mentioned above that gives each car a specific score, called its fitness.
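One possible shape for such a fitness function and for picking the two parents is sketched below. The `CarStats` fields and the weighted-sum formula are assumptions for illustration; with the weights (1, 0, 0) the score reduces to the time-driving criterion chosen for this project.

```csharp
using System;

// Illustrative sketch only; the statistics and weighting are assumptions.
public static class SelectionSketch
{
    // Hypothetical per-car statistics collected during one generation.
    public struct CarStats
    {
        public float TimeAlive;     // seconds driven before the collision
        public float Distance;      // distance travelled on the track
        public float AverageSpeed;  // Distance / TimeAlive
    }

    // Weighted-sum fitness; the weights are free parameters to tune.
    public static float Fitness(CarStats s, float wTime, float wDistance, float wSpeed)
    {
        return wTime * s.TimeAlive + wDistance * s.Distance + wSpeed * s.AverageSpeed;
    }

    // Returns the indices of the best and second-best cars by fitness.
    public static (int best, int second) SelectParents(float[] fitness)
    {
        if (fitness.Length < 2)
            throw new ArgumentException("At least two cars are needed.");
        int best = -1, second = -1;
        for (int i = 0; i < fitness.Length; i++)
        {
            if (best < 0 || fitness[i] > fitness[best])
            {
                second = best;  // the previous best becomes the runner-up
                best = i;
            }
            else if (second < 0 || fitness[i] > fitness[second])
            {
                second = i;
            }
        }
        return (best, second);
    }
}
```

Keeping the fitness weights as parameters makes it easy to experiment with different selection criteria without touching the selection code.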

- CROSSOVER

With the parents selected, we combine their genes to create a new DNA that mixes both, taking random fragments from one parent and from the other. The probability of picking each parent can be adjusted according to their fitness: when the scores are similar, the genes of both parents are about equally likely to be chosen, and when they are very different, the fitter parent can be favoured.

You can see here an example of the genes being manipulated:
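In code, one simple variant is uniform crossover, where each gene is copied from one parent or the other at random. The sketch below is an assumption about how this could look, not the tutorial's implementation: `CrossoverSketch` is a hypothetical name, and the fitness-proportional bias is just one way to favour the fitter parent.

```csharp
using System;

// Illustrative sketch only: uniform crossover with a fitness-based bias.
public static class CrossoverSketch
{
    // Probability of taking a gene from parent A, proportional to its fitness.
    // Negative or zero-sum fitness values fall back to an even 0.5.
    public static double ParentABias(float fitnessA, float fitnessB)
    {
        float total = fitnessA + fitnessB;
        if (fitnessA < 0f || fitnessB < 0f || total <= 0f) return 0.5;
        return fitnessA / total;
    }

    // Each gene is copied from A with probability pA, otherwise from B.
    public static float[] Crossover(float[] parentA, float[] parentB, double pA, Random rng)
    {
        if (parentA.Length != parentB.Length)
            throw new ArgumentException("Parents must have the same number of genes.");
        var child = new float[parentA.Length];
        for (int i = 0; i < child.Length; i++)
            child[i] = rng.NextDouble() < pA ? parentA[i] : parentB[i];
        return child;
    }
}
```

With equal fitness the bias is 0.5 and both parents contribute about half of the genes each; copying whole fragments instead of single genes would be a small variation on the same loop.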

- MUTATION

For each new car of the new generation we create this mixture of both parents and then apply mutations to it. Mutation is needed because the parents are only the best of their generation, not the best possible drivers. Small mutations in the DNA of each new car give it the chance to become better than its parents.

For more information about genetic algorithms, see Genetic Algorithms.

The next video is the tutorial on how to program the genetic algorithm and implement the genes in code:
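A minimal sketch of such a mutation step is shown below. The parameters `mutationRate` and `maxStep`, and the choice to clamp genes back into [-1, 1], are assumptions for this example rather than values taken from the project.

```csharp
using System;

// Illustrative sketch only; parameter names and clamping are assumptions.
public static class MutationSketch
{
    // Returns a mutated copy of the DNA. Each gene is changed with probability
    // mutationRate by a random offset in [-maxStep, maxStep].
    public static float[] Mutate(float[] dna, double mutationRate, float maxStep, Random rng)
    {
        var mutated = (float[])dna.Clone();
        for (int i = 0; i < mutated.Length; i++)
        {
            if (rng.NextDouble() < mutationRate)
            {
                float delta = (float)(rng.NextDouble() * 2.0 - 1.0) * maxStep;
                // Keep the gene inside the interval used at initialization.
                mutated[i] = Math.Max(-1f, Math.Min(1f, mutated[i] + delta));
            }
        }
        return mutated;
    }
}
```

A low rate with a small step keeps children close to their parents, while higher values add diversity at the cost of losing good genes more often.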

VISUAL 3D SIMULATOR

In addition to the algorithms, we need to create all the meshes for the cars, terrain, effects, track and camera.

Each car must be able to move and rotate. Each car will have its own lasers that raycast the distances to the track walls and output a value from 0 to 1.

The camera will behave like a drone that follows one car and moves smoothly when switching to another car. It will rotate around the car it is looking at.

The terrain, track and effects can be chosen by the creator. Nevertheless, I have created a video explaining one simple terrain with a track and water:

LASERS

The neural networks need input data in order to control the car’s movement and rotation. In this case we store the distances measured by several rays that are cast toward the walls of the track. The sensor has parameters such as the angle of view and the number of laser sensors.
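The two parameters mentioned above can be turned into ray directions and network inputs roughly as follows. This is a sketch in plain C# under stated assumptions: `LaserSketch`, `maxRange` and the convention that a miss counts as 1 are hypothetical. In Unity the hit distance itself would typically come from a `Physics.Raycast` call, which is left out here.

```csharp
using System;

// Illustrative sketch only; names and conventions are assumptions.
public static class LaserSketch
{
    // Angles in degrees, relative to the car's heading, of sensorCount rays
    // spread evenly from -fieldOfView/2 to +fieldOfView/2.
    public static float[] RayAngles(int sensorCount, float fieldOfViewDegrees)
    {
        var angles = new float[sensorCount];
        if (sensorCount == 1) return angles; // a single ray points straight ahead
        float step = fieldOfViewDegrees / (sensorCount - 1);
        for (int i = 0; i < sensorCount; i++)
            angles[i] = -fieldOfViewDegrees / 2f + i * step;
        return angles;
    }

    // Maps a hit distance to [0, 1]; no wall within maxRange counts as 1.
    public static float Normalize(float hitDistance, float maxRange, bool hit)
    {
        if (!hit) return 1f;
        return Math.Max(0f, Math.Min(1f, hitDistance / maxRange));
    }
}
```

Normalizing to the 0 to 1 range keeps the inputs on a scale comparable to the weights, whatever the size of the track.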

CAR MOVEMENT

The outputs of the neural network will be the rotation and the acceleration. We will need to update the car’s position and rotation according to these values and the time elapsed in the simulation. The car will follow uniformly accelerated linear motion.
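The update can be sketched with the standard equations of uniformly accelerated motion, s = v·dt + a·dt²/2 and v = v + a·dt. In the example below, `CarMotionSketch`, the tuning constants and the heading convention are assumptions for illustration, and the two network outputs are assumed to lie in [-1, 1].

```csharp
using System;

// Illustrative sketch only; constants and conventions are assumptions.
public class CarMotionSketch
{
    public float X, Z;            // position on the ground plane
    public float HeadingDegrees;  // 0 points along +Z, 90 along +X
    public float Speed;           // units per second

    // Hypothetical tuning constants.
    public float MaxAcceleration = 5f;  // units per second squared
    public float MaxTurnRate = 90f;     // degrees per second

    // rotation and acceleration are the two network outputs.
    public void Step(float rotation, float acceleration, float dt)
    {
        HeadingDegrees += rotation * MaxTurnRate * dt;

        float a = acceleration * MaxAcceleration;
        // Distance covered during dt under constant acceleration a.
        float distance = Speed * dt + 0.5f * a * dt * dt;
        Speed += a * dt;

        double rad = HeadingDegrees * Math.PI / 180.0;
        X += (float)(Math.Sin(rad) * distance);
        Z += (float)(Math.Cos(rad) * distance);
    }
}
```

Calling `Step` once per frame with the frame time as `dt` keeps the motion consistent across different frame rates.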

CAMERA

The camera will move automatically from one car to another, following one of the cars that has not collided yet. When the car it is following collides, it will switch smoothly to another car. The camera will also rotate slowly around the car.

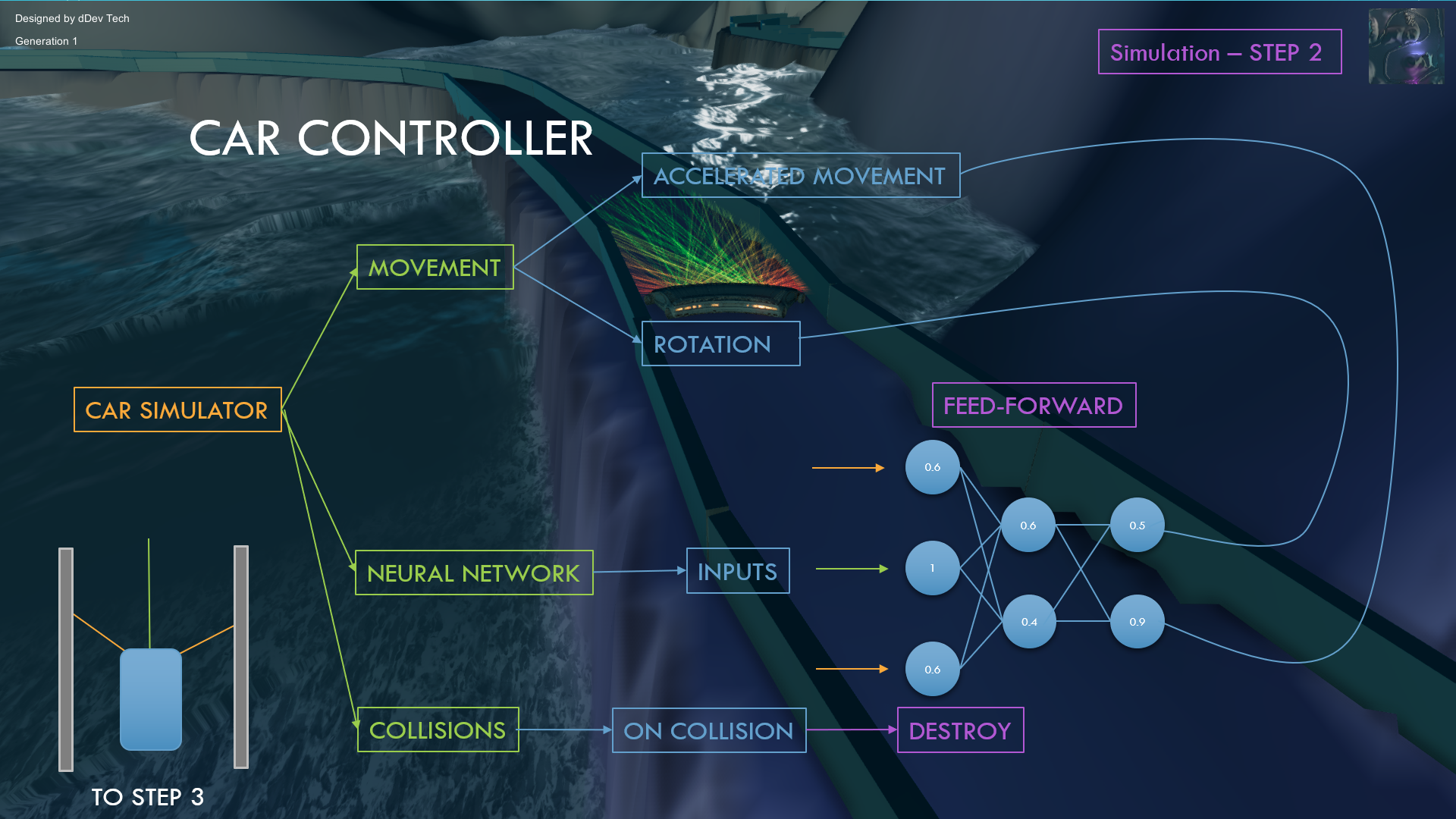
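One common way to get this kind of smooth transition is exponential smoothing toward the target position, applied to each coordinate of the camera. The sketch below assumes this technique and a simple "first car still driving" rule for choosing the next target; both are illustrative choices, and `CameraFollowSketch` is a hypothetical name.

```csharp
using System;

// Illustrative sketch only; the smoothing technique is an assumption.
public static class CameraFollowSketch
{
    // Moves current a fraction of the way toward target. The fraction is
    // derived from dt so the motion is largely frame-rate independent.
    public static float SmoothToward(float current, float target, float smoothing, float dt)
    {
        float t = 1f - (float)Math.Exp(-smoothing * dt);
        return current + (target - current) * t;
    }

    // Picks the first car that has not collided yet, or -1 if none is left.
    public static int NextTarget(bool[] collided)
    {
        for (int i = 0; i < collided.Length; i++)
            if (!collided[i]) return i;
        return -1;
    }
}
```

When the followed car collides, the controller only has to change the target index; the same smoothing call then glides the camera to the new car.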

CONTROLLER

Finally, we need to bring together everything created so far. A class called Controller will be in charge of controlling every object and algorithm in the simulator.

The laser readings of each car are assigned to the input layer of that car’s Neural Network. We then apply the feed-forward algorithm to get the outputs of the network: the rotation and acceleration used in the car’s physics. This sequence is repeated on every update for every car.

In the first generation the DNA of every car is random. In later generations the weights are adjusted by the genetic algorithm.

The next video covers the Car Controller. It is the final video, in which you will finish all the code and fix some bugs so that the project works. I will also explain how the parameters work and how the Car Controller is coded.

If you like the project, leave a comment, and if you have any questions, let me know.

The code is available here: Github Repository

Hi.

Great tutorial. Thanks for sharing.

How long did it take to learn and on what hardware?

Could you publish code on github? Or are you willing to share code?

I tried some Python TensorFlow and bought hardware, but I am stuck because of my limited coding skills. I hope to improve that.

Any reason why you chose C# and not Python?

Thanks.

Unity 3D is a popular 3D engine and easy to use. C# is also a great programming language because it is fast and easy. I learned from YouTube tutorials and did the project by myself. Python is also good for Artificial Intelligence but not for 3D. I hope this was helpful for you.

Could you publish code?

I have done it now.

This tutorial is really helpful. Could you please add how to save the weights to not restart each generation again everytime. I have attempted to save the winner and secWinner in a json but it does not work and just saves {} and the debug just says DNA. Please add a tutorial / update github as I believe many people are asking for this.

I’m preparing a new simulator in which I will explain advanced topics of self-driving cars, including the JSON save. In the meantime, if you want to do it with Unity’s JSON utility, you convert the object (class) you want to save, for example the DNA, into a string, save that string to a file and load it later.

Thank you for the very fast reply. So you are telling me I need to change the DNA object ‘winner’ to a string variable then store that. then when loading I need to convert it back to DNA? Also when do you think your next set of tutorials will be uploaded because you are the most useful tutorials I have found relating to how Neural network vehicles work.

I’m creating a new project now, so I will need time to publish more tutorials.

The idea is to do this: string S = JsonUtility.ToJson(DNA)

This string will contain all the JSON data, and then you can store it in a file.

I typed the source and created it as a video, but the camera doesn’t follow me and an error occurs.

NullReferenceException: Object reference not set to an instance of an object

Car.changeCamera () (at Assets/Car/Car.cs:67)

Car.OnTriggerEnter (UnityEngine.Collider col) (at Assets/Car/Car.cs:50)

Great project and great tutorials, BUT, it will be better if you use Python.

Most AI, ML and DL engineers use Python. I can’t understand anything from your code because I am a data scientist and machine learning engineer and, like others, I know Python and R.

Also, I suggest making your simulator ( Installable ) I mean .exe file like Udacity self-driving car simulator.

Thanks.

A lot of people have suggested that to me, including an AI lead developer at Google when I went to visit it. The problem is that I’m more comfortable with Java and C#. However, I have been learning Python and have also created projects in it, such as a finance analyzer.

Thanks for the suggestions. The driving simulator can be tested on my website https://www.retopall.con

Go to simulations – self driving car and sign in to use the editor on the website with Unity WebGL.

Thanks for the support!